Higher education is asking an urgent and legitimate question: “What should we do next with AI?”

But many institutions are still missing the more important one: “Is our data architecture and operating model ready for what AI can already do today – or are we still dealing with underlying data fragmentation?”

The Evolution of AI in Higher Ed

For a long time, skepticism about AI in analytics was reasonable. Language models could write, summarize, and mimic expertise, but they were not reliable engines for structured analysis on their own.

That is no longer the case.

Today, AI can increasingly work with tools, calculations, retrieval, and enterprise data. In practical terms, that means AI can begin to function like an analyst over trusted institutional data – not perfectly, not without governance, and not without human judgment, but enough to change how institutions should think about reporting, insight, and decision-making.

Data Unification: The Barrier to AI

At Jax Consulting, our AI & Data specialists see the same pattern across higher education. The main barrier is usually not a lack of interest in AI. It is fragmented data that has never been unified across systems.

Most institutions still separate the two things modern analytics needs most:

- Treatment data: campaigns, application behavior, communications, interventions, funnel activity

- Outcome data: enrollment, retention, student success, academic outcomes, financial outcomes

Those datasets often live in different systems, under different owners, with different logic, and sometimes under vendors that make integration difficult. That fragmentation is no longer just a reporting inconvenience. It is now a strategic constraint.

Breaking Down Data Silos

If you cannot connect actions to outcomes, you cannot fully understand what worked, what changed behavior, which interventions matter, or which questions deserve action.

And that is exactly why data unification has become so central to the conversation.
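
As a rough illustration of what connecting actions to outcomes can look like, the Python sketch below loads two tiny, made-up tables into an in-memory SQLite database and joins them on a shared student identifier. The table names, column names, and rows are all hypothetical. Real institutional systems rarely share a clean key like this, and creating one is a large part of the unification work.

```python
import sqlite3

# Hypothetical sample rows for illustration only; not real institutional data.
TREATMENTS = [  # (student_id, outreach_channel)
    ("s1", "email"),
    ("s2", "email"),
    ("s3", "text"),
    ("s4", "text"),
]
OUTCOMES = [  # (student_id, enrolled as 0 or 1)
    ("s1", 1),
    ("s2", 0),
    ("s3", 1),
    ("s4", 1),
]


def enrollment_rate_by_channel(treatments, outcomes):
    """Join treatment rows to outcome rows and return {channel: enrollment rate}."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute("CREATE TABLE treatment (student_id TEXT, channel TEXT)")
        conn.execute("CREATE TABLE outcome (student_id TEXT, enrolled INTEGER)")
        conn.executemany("INSERT INTO treatment VALUES (?, ?)", treatments)
        conn.executemany("INSERT INTO outcome VALUES (?, ?)", outcomes)
        rows = conn.execute(
            """
            SELECT t.channel, AVG(o.enrolled)
            FROM treatment AS t
            JOIN outcome AS o ON o.student_id = t.student_id
            GROUP BY t.channel
            ORDER BY t.channel
            """
        ).fetchall()
    finally:
        conn.close()
    return dict(rows)


rates = enrollment_rate_by_channel(TREATMENTS, OUTCOMES)
for channel, rate in rates.items():
    print(f"{channel}: {rate:.0%} enrolled")
```

Two caveats apply even to a toy example like this. The inner join silently drops any student who appears in only one table, so coverage gaps need to be checked separately. And the result is a correlation between outreach channel and enrollment, not proof that the channel caused the outcome.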

The Limits of Traditional Analytics in Higher Education

The old analytics operating model is starting to break down. For years, institutions paid analysts to anticipate what leaders might want to know, then build reports, dashboards, and pipelines in advance.

That model made sense when every important question had to become a reporting request. But it is expensive, creates latency, and leads to dashboard sprawl. It ultimately turns high-value analysts into manual answer factories.

The Future of AI-Powered Analytics

The new model looks different.

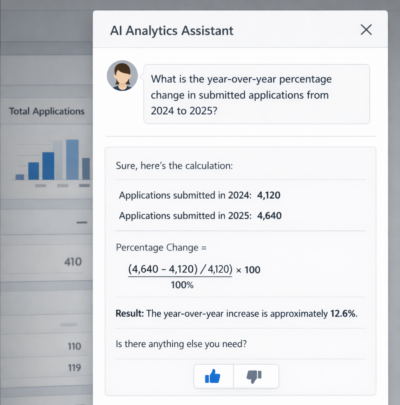

When data is unified and governed, stakeholders can increasingly ask questions directly in natural language, inside the systems where work already happens.

Instead of waiting on a reporting queue, they can ask:

- What is the year-over-year change in submitted applications?

- Which outreach patterns correlate with completed applications?

- Where are we investing effort without measurable movement?

- Which signals suggest a student is at risk of dropping out?
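
Even the simplest of these questions depends on an agreed definition. As a minimal sketch, the first question above reduces to the calculation below; the application counts are hypothetical, and the function name is an illustrative choice rather than part of any specific platform.

```python
def year_over_year_change(current, prior):
    """Return the fractional change from prior to current (0.10 means +10%)."""
    if prior == 0:
        raise ValueError("prior-year count is zero; percentage change is undefined")
    return (current - prior) / prior


# Hypothetical counts of submitted applications by year.
submitted = {2023: 4000, 2024: 4300}

change = year_over_year_change(submitted[2024], submitted[2023])
print(f"Year-over-year change in submitted applications: {change:+.1%}")
```

The arithmetic is trivial. The harder part is agreeing on what counts as a "submitted application" and on which dates are being compared, which is why a natural-language answer is only as trustworthy as the definitions behind it.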

The Evolving Role of Analysts

The new model does not eliminate analysts. It elevates them.

The analyst of the next few years should spend less time building static reporting for hypothetical questions and more time on data design, semantic consistency, governance, experimentation, and interpretation.

AI Adoption Challenges in Higher Ed

But there is another mistake institutions are making. Many recognize that AI matters, then try to solve readiness through one new hire, one new title, or one overstretched office expected to fix reporting, clean data, modernize systems, and lead AI adoption all at once.

That is not transformation. That is role overloading. AI readiness cannot be hired as a title if it has not been built as a capability.

Building a Center for Enablement

Capability does not come from technology alone. It also comes from enablement.

That is where a Center for Enablement mindset becomes so important. Higher education needs a function, team, or operating model that helps people understand where trusted data lives, what AI can answer well, how to ask better questions, what guardrails apply, and when deeper analysis is still required.

Because even when the platform exists, adoption stalls if users do not know how to work differently.

A Shift Toward Better Questions

AI does not remove the need for thinking. It raises the premium on better questions.

In the old model, institutions spent heavily to anticipate what stakeholders might someday want to know.

In the emerging model, the burden shifts upward. Leaders have to ask better questions, teams have to trust the data, and institutions have to stop treating AI as a tool rollout and start treating it as a redesign of how insight is generated and used.

How to Prepare for AI in Higher Education

So what should institutions do next?

- Unify treatment data and outcome data

- Reduce integration barriers

- Define trusted business logic

- Build an enablement function, not just an implementation plan

- Redesign analytics so the goal is not more dashboards, but faster answers to better, more actionable questions

Data Unification Is the Key

The institutions that move ahead will not be the ones that talk about AI the most. They will be the ones that make their data usable, their systems connected, and their people ready to work in a new way.

AI isn’t the bottleneck. Data silos are.

Learn more about Jax Consulting AI services and higher education expertise.